In 1996, I completed an honours year as part of my Comp. Sci. degree. This involved a thesis, in which I chose to investigate a particular optimization technique in computer graphics. The impressive title of my thesis was: Space-time ray-tracing using ray-classification. I occasionally get the odd email about it, and the ftp server at Macquarie Uni that housed my paper, code and animations is now gone, so I decided it was about time to do a little data dump of my honours project.

The General Idea

Ray-tracing is a neat and elegant algorithm for producing photo-realistic images. Think about what happens when a camera takes a picture in real life: various light sources (the Sun, light globes, etc.) bathe the scene in photons, which bounce around as they are absorbed and re-emitted by objects. Some of these photons eventually end up passing through the lens of the camera and striking the film. You could easily imagine an algorithm that simulated this by tracing these rays of light forward from the light sources. The problem is that the vast majority would never hit the camera, so you end up tracing rays that never contribute to the image.

Backward ray-tracing (or just “ray-tracing”) does the opposite: it traces rays out of the camera, bouncing rays backwards through the scene to see if they hit any light sources. This bouncing of rays allows many real-world visual artifacts to be modeled: transparency/refraction (bounce rays through objects), reflection (bounce rays along a perfect angle), diffusion (bounce rays along many angles), shadows (rays bounced towards lights are instead occluded by intervening objects).

There are various models and heuristics for bouncing rays backwards off objects. The real problem is calculating which object a ray hits. The brute force approach is to test for intersection of the ray with every object in the scene, then pick the intersection that occurred closest to the origin of the ray. Because this test runs for every ray, and its cost grows with the number of objects in the scene, it is easy to see that it will be the bottleneck of the algorithm. Much of the research into ray-tracing has gone into improving the search for the object with which a ray first intersects.
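To make the brute force approach concrete, here is a minimal sketch, assuming a scene made only of spheres and rays with unit-length directions. The types and function names (`Vec3`, `Ray`, `Sphere`, `intersect`, `closest_hit`) are illustrative and are not taken from the thesis code.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

struct Vec3 { double x, y, z; };

inline Vec3 operator-(const Vec3& a, const Vec3& b) {
    return Vec3{a.x - b.x, a.y - b.y, a.z - b.z};
}
inline double dot(const Vec3& a, const Vec3& b) {
    return a.x * b.x + a.y * b.y + a.z * b.z;
}

struct Ray    { Vec3 origin; Vec3 dir; };        // dir is assumed to be unit length
struct Sphere { Vec3 centre; double radius; };

// Result of a search: which object was hit (if any) and how far along the ray.
struct Hit { bool found; std::size_t object; double t; };

const double kMiss = std::numeric_limits<double>::infinity();

// Distance along the ray to the nearest intersection in front of the origin,
// or kMiss if the ray does not hit the sphere.
double intersect(const Ray& ray, const Sphere& s) {
    const Vec3 oc = ray.origin - s.centre;
    const double b = dot(oc, ray.dir);
    const double c = dot(oc, oc) - s.radius * s.radius;
    const double disc = b * b - c;               // discriminant of t^2 + 2bt + c = 0
    if (disc < 0.0) return kMiss;
    const double root = std::sqrt(disc);
    const double eps = 1e-9;                     // ignore hits at (or behind) the origin
    if (-b - root > eps) return -b - root;
    if (-b + root > eps) return -b + root;
    return kMiss;
}

// Brute force: test every object and keep the hit closest to the ray origin.
Hit closest_hit(const Ray& ray, const std::vector<Sphere>& scene) {
    Hit best = {false, 0, kMiss};
    for (std::size_t i = 0; i < scene.size(); ++i) {
        const double t = intersect(ray, scene[i]);
        if (t < best.t) best = Hit{true, i, t};
    }
    return best;
}
```

Every call to `closest_hit` visits every object, so the cost of a single ray grows linearly with the size of the scene. That is the cost the acceleration techniques try to cut down.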

The Specific Idea

My honours thesis concerned a technique for speeding up the ray-intersection calculation that also took into account time (animated scenes). If you really want to know the details you can read the paper, but the general gist goes like this: a ray is defined by 5 parameters: its (x, y, z) origin, and two angles that describe its direction. This means each ray exists as a point in a 5D space. Add time into the equation, and you end up with a 6D space. Subdivide this 6D space, and associate with each cell all the objects that might intersect rays from that cell. This reduces the number of objects you need to consider when doing a ray-intersection test.
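The mapping from a ray to a cell can be sketched roughly as follows. This is a simplified illustration, not the thesis implementation: it assumes a uniform grid rather than adaptive subdivision, uses spherical angles for the direction, and all names (`TimedRay`, `Point6`, `Cell6`, `Bounds6`, `classify`, `CandidateTable`) are made up for the example.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <map>
#include <vector>

struct Vec3 { double x, y, z; };
struct TimedRay { Vec3 origin; Vec3 dir; double time; };  // dir assumed unit length

using Point6 = std::array<double, 6>;        // (x, y, z, theta, phi, time)
using Cell6  = std::array<std::size_t, 6>;   // grid index along each of the 6 axes
struct Bounds6 { Point6 lo; Point6 hi; };    // extent of the 6D space

// Express a ray as a point in 6D: origin, two direction angles, and time.
Point6 to_point6(const TimedRay& r) {
    const double cz = std::min(std::max(r.dir.z, -1.0), 1.0);
    const double theta = std::acos(cz);                    // polar angle in [0, pi]
    const double phi = std::atan2(r.dir.y, r.dir.x);       // azimuth in [-pi, pi]
    return Point6{{r.origin.x, r.origin.y, r.origin.z, theta, phi, r.time}};
}

// Find the grid cell containing a 6D point (uniform grid, clamped to bounds).
Cell6 classify(const Point6& p, const Bounds6& b, std::size_t cells_per_axis) {
    assert(cells_per_axis > 0);
    Cell6 cell{};
    for (std::size_t i = 0; i < 6; ++i) {
        const double extent = b.hi[i] - b.lo[i];
        assert(extent > 0.0);
        double u = (p[i] - b.lo[i]) / extent;              // normalise to [0, 1]
        u = std::min(std::max(u, 0.0), 1.0);
        const std::size_t k = static_cast<std::size_t>(u * cells_per_axis);
        cell[i] = std::min(k, cells_per_axis - 1);         // u == 1 falls in the last cell
    }
    return cell;
}

// Each cell maps to the indices of objects that rays from that cell might hit.
using CandidateTable = std::map<Cell6, std::vector<std::size_t>>;

// Candidate list for a cell, or nullptr if nothing has been stored for it.
const std::vector<std::size_t>* candidates_for(const CandidateTable& table,
                                               const Cell6& cell) {
    const auto it = table.find(cell);
    return it == table.end() ? nullptr : &it->second;
}
```

With a table like this, an intersection test only needs to loop over the candidates stored for the ray's cell instead of the whole scene. Filling the table (working out which objects can be reached by the bundle of rays in a cell) and splitting cells adaptively are the hard parts, and are left out of this sketch.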

That might sound complicated, but it is that tried and true technique: convert your problem into a mathematical space and adaptively subdivide the space to reduce the work you need to do.

The Thesis

The (original) PostScript version of my thesis is available here: thesis.ps.gz (384KB). I have since converted it to PDF, which should be clearer to read on screen: thesis.pdf (348KB). An entry for this paper can be found in CiteSeer here: http://citeseer.ist.psu.edu/quail96space.html.

The abstract is as follows:

Throughout the history of computer graphics, a very active area of research has been the attempt to create convincing, life-like images. Ray tracing is a technique for generating photorealistic images, and can easily support a wide range of natural phenomenon. However, producing a ray traced image is very expensive; and producing a ray traced animation is often prohibitively expensive.

This thesis looks at two techniques for efficiently producing ray traced animations; Glassner’s space time hierarchical bounding volume technique, and my space time extension of Arvo and Kirk’s ray classification. The results show that my method is more efficient; however Glassner’s technique has much better memory performance. Based on some of the ideas of my method, some ways of improving Glassner’s technique are suggested.

57 pages.

The Code

I shudder at the thought of making this code public, but here it is: src.tgz. I wrote that code a long time ago, long before I’d been introduced to the concept of common coding conventions. I’ve made a few tweaks to the original source so that it at least compiles without errors on a modern C++ compiler.

The code contains an API for defining the scene to be rendered. It doesn’t take in a “scene description” text file; you actually define the scene with some C++ code. This works quite elegantly, as the API is designed so that your scene description looks mostly declarative anyway.

Because the scene definition needs to be compiled into the program, you can’t just “run” the ray-tracer. So there is a little rend shell script to manage this process. For example, you can render the “mount” animation like so:

./rend out.txt strcom.cc test/mount.cc

Statistics about the rendering are saved to out.txt. The ray/object intersection algorithm is pluggable; strcom.cc is my space-time ray-classifier. There is also a naive (brute force) algorithm, and an implementation of Glassner’s hybrid SS/HBV technique (for comparison). You will want to do a make clean if you change scenes.

The Animations

I created three animations for testing the performance of the ray-tracer. The output of the ray-tracer was a series of TGA files, one for each frame. At the time, I converted these to FLI files (lossless). I have since converted the FLI files to MOV (QuickTime, lossy). Both are included below.

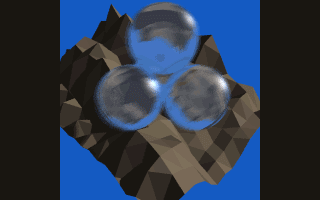

This is a short animation of 3 crystal balls doing a donut above a randomly generated “mountain”. Technically, this tests the ray-tracer’s ability to handle animated transparency.

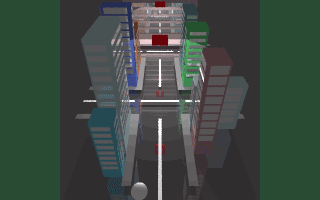

“Night City” is my favorite; it is the most complicated (in terms of shapes and movement), and is cinematically the most interesting. It depicts traffic in some kind of “particle city”. Lights above illuminate the roads, and the cross-lines on the intersections control the traffic. The camera in the animation follows one “particle” as it makes its way towards a building.

“Night City” was not that difficult to create because objects can be created procedurally. For example, each building could be created with a call like create_building(floors, width, length, colour). The hardest part was modeling the path of the camera as a mathematical function. The goal of this animation was to test both camera movement and object movement.
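As a rough illustration of what procedural creation can look like, here is a small sketch. Only the `create_building(floors, width, length, colour)` call shape comes from the text above; the `Box` and `Colour` types, the `kFloorHeight` constant, and the `translated` and `create_street` helpers are hypothetical and do not reflect the actual scene API in src.tgz.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

struct Colour { double r, g, b; };

// Axis-aligned box from (x0, y0, z0) to (x1, y1, z1); z is "up" in this sketch.
struct Box { double x0, y0, z0, x1, y1, z1; Colour colour; };

const double kFloorHeight = 3.0;  // assumed height of one floor, in scene units

// One building, sitting on the ground with a corner at the origin.
Box create_building(int floors, double width, double length, Colour colour) {
    assert(floors > 0 && width > 0.0 && length > 0.0);
    return Box{0.0, 0.0, 0.0, width, length, floors * kFloorHeight, colour};
}

// Copy of a box shifted along the ground plane.
Box translated(Box b, double dx, double dy) {
    b.x0 += dx; b.x1 += dx;
    b.y0 += dy; b.y1 += dy;
    return b;
}

// A row of buildings with varying heights, generated by a loop
// rather than placed one by one.
std::vector<Box> create_street(int count, double spacing) {
    std::vector<Box> street;
    const Colour grey = {0.5, 0.5, 0.5};
    for (int i = 0; i < count; ++i) {
        const int floors = 2 + (i % 3) * 4;  // heights cycle through 2, 6, 10 floors
        street.push_back(
            translated(create_building(floors, 10.0, 10.0, grey), i * spacing, 0.0));
    }
    return street;
}
```

A call such as `create_street(4, 15.0)` yields four boxes spaced 15 units apart. A whole city is then a couple of nested loops over calls like this.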

This last animation is designed to emphasize the “space-time” notion: the motion of objects through time. The motion-blur should be evident.

The End

I look back on my honours year with fondness, but at the same time it was a lot of hard work. I remember making a mental note to think very, very hard before embarking on something like that again (such as a Ph.D.).